Scrapy in Python (How It Works for Developers)

Efficiency and reliability are critical in web scraping and document generation. Extracting data from websites and converting it into professional-quality documents requires tools and frameworks that integrate smoothly.

Enter Scrapy, a Python web scraping framework, and IronPDF, a PDF library: two tools that work together to streamline the extraction of online data and the creation of dynamic PDFs.

Scrapy, a leading Python web crawling and scraping library, lets developers crawl complex sites and extract structured data quickly and precisely. With robust XPath and CSS selectors and an asynchronous architecture, it suits scraping jobs of any complexity.

IronPDF, for its part, is a powerful .NET library that supports programmatic creation, editing, and manipulation of PDF documents. Its PDF creation tools, which include HTML to PDF conversion and PDF editing, give developers a complete solution for producing dynamic, well-formatted PDF documents.

This post walks through integrating Scrapy with IronPDF and shows how the two libraries simplify web scraping and document creation, from scraping data with Scrapy to dynamically generating PDF reports with IronPDF.

Let's explore what web scraping and document generation look like when Scrapy and IronPDF are used together.

Asynchronous Architecture

Scrapy's asynchronous architecture lets it process several requests at once. This increases throughput and speeds up scraping, particularly on complicated websites or with large amounts of data.
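How many requests run concurrently is controlled through project settings. The excerpt below is a minimal sketch of a settings.py fragment; the setting names are Scrapy's, but the values are illustrative assumptions rather than recommendations.

```python
# settings.py (excerpt) -- values are illustrative assumptions, tune per site

# Upper bound on requests Scrapy keeps in flight across all domains
CONCURRENT_REQUESTS = 16

# Upper bound on simultaneous requests to any single domain
CONCURRENT_REQUESTS_PER_DOMAIN = 8

# Seconds to wait between consecutive requests to the same site
DOWNLOAD_DELAY = 0.5

# Let the AutoThrottle extension adapt the delay to observed latency
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_TARGET_CONCURRENCY = 4.0
```

Lower concurrency and a longer delay are gentler on the target site; higher values trade politeness for speed.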

Sturdy Crawl Management

Scrapy has strong crawl management features, such as automated URL filtering, configurable request scheduling, and integrated handling of robots.txt directives. Developers can adjust the crawl behavior to meet their own needs and to comply with website guidelines.
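As a rough sketch of what adjusting crawl behavior can look like, the settings.py fragment below honors robots.txt, caps crawl depth, and switches the scheduler to breadth-first order. The depth limit of 3 is an arbitrary assumption chosen for illustration.

```python
# settings.py (excerpt) -- a sketch; the depth limit is an arbitrary example value

# Respect the target site's robots.txt directives
ROBOTSTXT_OBEY = True

# Do not follow links more than 3 hops away from the start URLs
DEPTH_LIMIT = 3

# Crawl in breadth-first order instead of the default depth-first order
DEPTH_PRIORITY = 1
SCHEDULER_DISK_QUEUE = "scrapy.squeues.PickleFifoDiskQueue"
SCHEDULER_MEMORY_QUEUE = "scrapy.squeues.FifoMemoryQueue"
```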

Selectors for XPath and CSS

Scrapy lets users navigate HTML pages and pick out elements using XPath and CSS selectors. Because developers can target specific elements or patterns on a web page, data extraction becomes more precise and dependable.

Item Pipeline

Developers can specify reusable components for processing scraped data before exporting or storing it using Scrapy's item pipeline. By performing operations like cleaning, validation, transformation, and deduplication, developers can guarantee the accuracy and consistency of the data that has been extracted.
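A pipeline component is a plain Python class with a process_item method. The sketch below assumes items are dictionaries with text, author, and tags keys, as yielded by a quotes spider; the class name CleanQuotePipeline is hypothetical.

```python
class CleanQuotePipeline:
    """Hypothetical pipeline that normalizes scraped quote items."""

    def process_item(self, item, spider):
        # Trim whitespace, then remove surrounding curly quotation marks
        text = (item.get("text") or "").strip()
        item["text"] = text.strip("\u201c\u201d")

        # Trim whitespace around the author name
        item["author"] = (item.get("author") or "").strip()

        # Remove duplicate tags while preserving their original order
        item["tags"] = list(dict.fromkeys(item.get("tags") or []))

        # Returning the item passes it on to the next pipeline stage
        return item
```

To take effect, a class like this would be registered in the ITEM_PIPELINES setting under its module path.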

Built-in Middleware

Scrapy ships with a number of middleware components and extensions that offer features like automatic cookie handling, request throttling, user-agent configuration, and HTTP proxy support. Rotating user agents or proxies is typically added through custom or third-party middleware. These components are easy to configure and customize to improve scraping efficiency and address typical issues.
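As a rough sketch of how a downloader middleware can be customized, the class below picks a user agent at random for each outgoing request. The class name and the user-agent strings are placeholders invented for this example.

```python
import random


class RotateUserAgentMiddleware:
    """Hypothetical downloader middleware that varies the User-Agent header."""

    # Placeholder values; a real project would supply its own strings
    USER_AGENTS = [
        "ExampleBot/1.0 (+https://example.com/bot)",
        "ExampleBot/1.1 (+https://example.com/bot)",
    ]

    def process_request(self, request, spider):
        # Overwrite the header before the request is downloaded
        request.headers["User-Agent"] = random.choice(self.USER_AGENTS)
        # Returning None tells Scrapy to continue processing this request
        return None
```

A middleware like this would be enabled through the DOWNLOADER_MIDDLEWARES setting, for example under an assumed path such as myproject.middlewares.RotateUserAgentMiddleware with a priority number like 400.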

Extensible Architecture

Scrapy's modular, extensible architecture lets developers add custom middleware, extensions, and pipelines to tailor and extend its capabilities. This adaptability makes it easy to fit Scrapy into existing workflows and adjust it to specific scraping needs.

Create and Configure Scrapy in Python

Install Scrapy

Install Scrapy using pip by running the following command:

pip install scrapy

Define a Spider

To define your spider, create a new Python file (such as example.py) under the spiders/ directory. Here is a basic spider that extracts quotes from a URL:

import scrapy

class QuotesSpider(scrapy.Spider):
    # Name of the spider
    name = 'quotes'
    # Starting URL
    start_urls = ['http://quotes.toscrape.com']

    def parse(self, response):
        # Iterate through each quote block in the response
        for quote in response.css('div.quote'):
            # Extract and yield quote details
            yield {
                'text': quote.css('span.text::text').get(),
                'author': quote.css('span small.author::text').get(),
                'tags': quote.css('div.tags a.tag::text').getall(),
            }

        # Identify and follow the next page link
        next_page = response.css('li.next a::attr(href)').get()
        if next_page is not None:
            yield response.follow(next_page, self.parse)

Configure Settings

To set Scrapy project parameters such as the user-agent, download delays, and pipelines, edit the settings.py file. The following example changes the user-agent and enables a pipeline:

# Obey robots.txt rules
ROBOTSTXT_OBEY = True

# Set user-agent
USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.3'

# Configure pipelines
ITEM_PIPELINES = {
    'myproject.pipelines.MyPipeline': 300,
}

Getting started

Getting started with Scrapy and IronPDF means combining Scrapy's robust web scraping capabilities with IronPDF's dynamic PDF generation features. The steps below set up a Scrapy project that extracts data from a website and uses IronPDF to create a PDF document containing that data.

What is IronPDF?

IronPDF is a powerful .NET library for creating, editing, and manipulating PDF documents programmatically in C#, VB.NET, and other .NET languages. Because it gives developers a wide feature set for dynamically creating high-quality PDFs, it is a popular choice for many applications.

Features of IronPDF

PDF Generation: Using IronPDF, programmers can create new PDF documents or convert existing content, such as HTML markup, text, images, and other file formats, into PDFs. This feature is very useful for creating reports, invoices, receipts, and other documents dynamically.

HTML to PDF Conversion: IronPDF makes it simple for developers to transform HTML documents, including their CSS styles and JavaScript, into PDF files. This enables the creation of PDFs from web pages, dynamically generated content, and HTML templates.

Modification and Editing of PDF Documents: IronPDF provides a comprehensive set of functions for modifying existing PDF documents. Developers can merge several PDF files, split a file into separate documents, remove pages, and add bookmarks, annotations, and watermarks, among other features, to customize PDFs to their requirements.

How to install IronPDF

After making sure Python is installed on your computer, use pip to install IronPDF.

pip install ironpdf

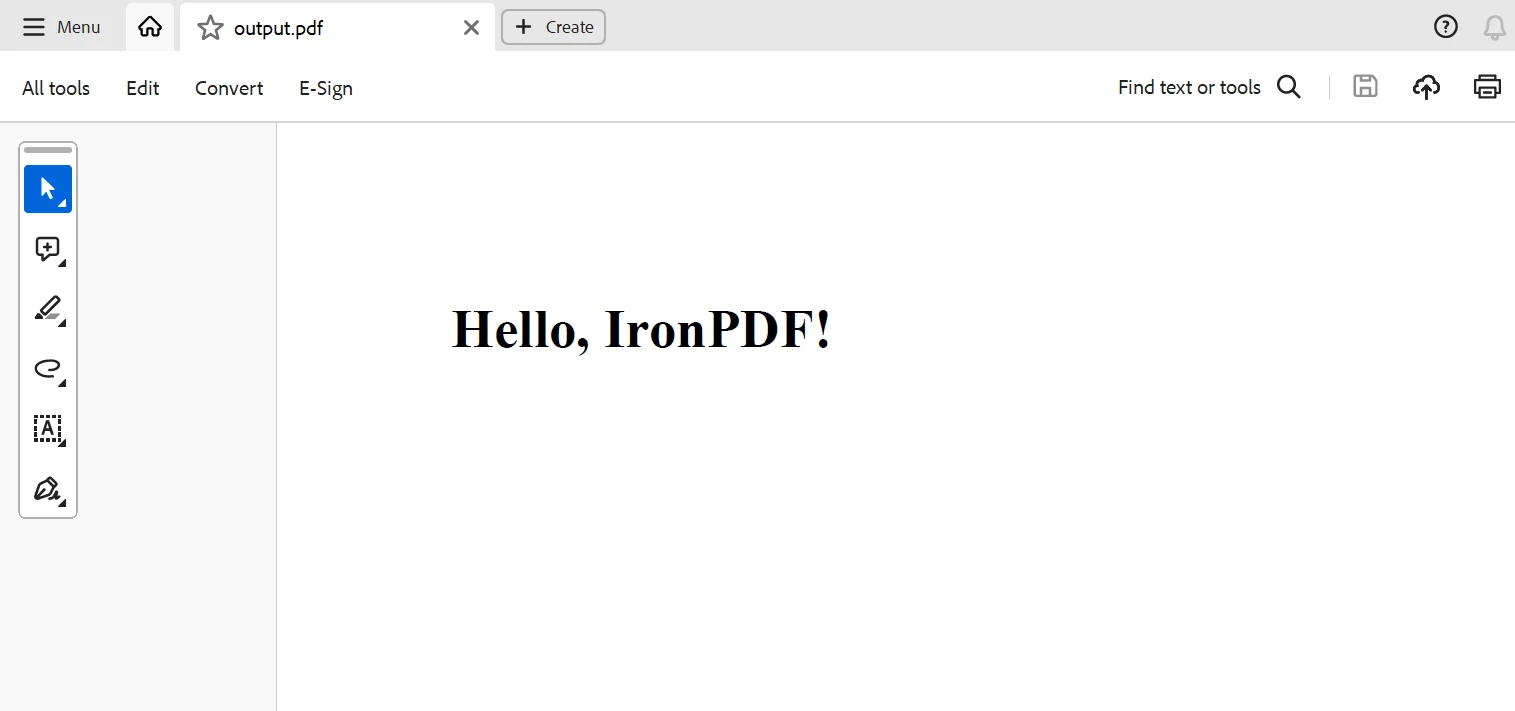

Scrapy project with IronPDF

To define your spider, create a new Python file (such as example.py) in the spiders directory of your Scrapy project (myproject/myproject/spiders). The following basic spider extracts quotes from a URL and writes them to a PDF:

import scrapy
from ironpdf import *

class QuotesSpider(scrapy.Spider):
    name = 'quotes'
    # Web page link
    start_urls = ['http://quotes.toscrape.com']

    def parse(self, response):
        quotes = []
        for quote in response.css('div.quote'):
            text = quote.css('span.text::text').get()
            author = quote.css('span small.author::text').get()
            quotes.append((text, author))  # Append quote to list

        # Generate PDF document using IronPDF
        renderer = ChromePdfRenderer()
        pdf = renderer.RenderHtmlAsPdf(self.get_pdf_content(quotes))
        pdf.SaveAs("quotes.pdf")

    def get_pdf_content(self, quotes):
        # Generate HTML content for PDF using extracted quotes
        html_content = "<html><head><title>Quotes</title></head><body>"
        for text, author in quotes:
            html_content += f"<h2>{text}</h2><p>Author: {author}</p>"
        html_content += "</body></html>"
        return html_content

In the above code example of a Scrapy project with IronPDF, IronPDF creates a PDF document from the data that Scrapy has extracted.

Here, the spider's parse method gathers quotes from the webpage and uses the get_pdf_content method to build the HTML content for the PDF file. IronPDF then renders this HTML content as a PDF document and saves it as quotes.pdf.
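Scraped text can contain characters such as & or < that have special meaning in HTML. One possible refinement of the HTML-building step, sketched below with only the standard library, escapes each value before it is placed into the markup. The function name build_quotes_html is hypothetical and is not part of Scrapy or IronPDF.

```python
from html import escape


def build_quotes_html(quotes):
    """Build an HTML document from (text, author) pairs, escaping scraped text."""
    parts = ["<html><head><title>Quotes</title></head><body>"]
    for text, author in quotes:
        # escape() converts &, <, >, and quote characters to HTML entities
        parts.append(f"<h2>{escape(text)}</h2><p>Author: {escape(author)}</p>")
    parts.append("</body></html>")
    # Joining a list avoids repeated string concatenation in the loop
    return "".join(parts)


if __name__ == "__main__":
    print(build_quotes_html([("Tea & sympathy", "A. Writer")]))
```

The returned string can be handed to the renderer in the same way as the output of get_pdf_content.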

Conclusion

To sum up, the combination of Scrapy and IronPDF gives developers a strong option for automating web scraping and producing PDF documents on the fly. IronPDF's flexible PDF generation features, together with Scrapy's powerful web crawling and scraping capabilities, provide a smooth process for gathering structured data from web pages and turning it into professional-quality PDF reports, invoices, or other documents.

With Scrapy spiders, developers can navigate complex sites, retrieve information from many sources, and organize it systematically. Scrapy's extensible framework, asynchronous architecture, and support for XPath and CSS selectors give it the flexibility and scalability needed for a wide variety of web scraping tasks.

IronPDF is sold with a lifetime license and is fairly priced when purchased as a bundle. The package costs $799, a one-time purchase that covers several systems, and license holders have 24/7 access to online technical support. For pricing details and more information about Iron Software's products, visit the Iron Software website.

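Frequently Asked Questions

How do I integrate Scrapy with a PDF generation tool?

You can integrate Scrapy with a PDF generation tool such as IronPDF by first using Scrapy to extract structured data from websites and then using IronPDF to convert that data into dynamic PDF documents.

What is the best way to scrape data and convert it to PDF?

The best way to scrape data and convert it to PDF is to use Scrapy to extract the data efficiently and IronPDF to generate high-quality PDFs from the extracted content.

How can I convert HTML to PDF in Python?

IronPDF is a .NET library, but it can be used with Python through interoperability solutions such as Python.NET, so you can convert HTML to PDF using IronPDF's conversion methods.

What are the advantages of using Scrapy for web scraping?

Scrapy offers advantages such as asynchronous processing, powerful XPath and CSS selectors, and customizable middleware, which streamline the process of extracting data from complex websites.

Can I automatically generate PDFs from web data?

Yes. By integrating Scrapy for data extraction with IronPDF for PDF generation, you can automatically generate PDFs from web data, giving you a smooth workflow from scraping to document generation.

What is the role of middleware in Scrapy?

Middleware in Scrapy lets you control and customize how requests and responses are processed, which can improve scraping efficiency through features such as automatic URL filtering and user-agent rotation.

How do I define a spider in Scrapy?

To define a spider in Scrapy, create a new Python file in your project's spiders directory and implement a class that extends scrapy.Spider, with methods such as parse to handle data extraction.

Why is IronPDF well suited to PDF generation?

IronPDF offers comprehensive features for HTML to PDF conversion, dynamic PDF generation, editing, and manipulation, making it versatile enough for a wide range of document generation needs.

How can I improve my web data extraction and PDF generation?

Use Scrapy for efficient data scraping and IronPDF to convert the extracted data into professionally formatted PDF documents.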